Political Polls: Manufactured News - Part 2

A Look at the manipulative character of political polls

Steven A. Carlson

Quick Review

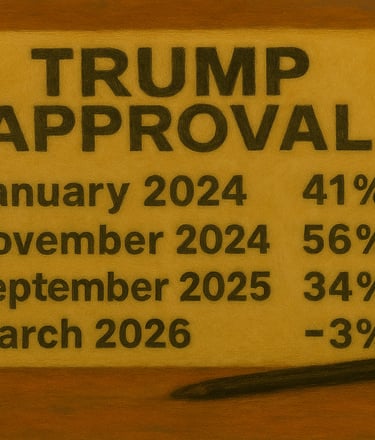

Part 1 of this essay discussed the relevance and significance of political polling. It noted that a political poll, particularly one measuring a candidate’s standing, is not a news event. The public should keep in mind that polling results are manufactured, even though they are often reported as significant events.

It is also important to remember that, because polls are manufactured, their results are not necessarily accurate and can be vulnerable to manipulation. This is evident in the wide gaps that appear when the results of different polls are compared. Part 2 examines the sources of these disparate outcomes.

Poll Modeling

Poll modeling involves a number of factors. Consider, for instance, the Reuters/Ipsos poll cited in Part 1 of this series. The survey purportedly interviewed 1,282 individuals, and according to the poll’s internal information, the participants consisted of 350 Democrats, 402 Republicans, and 530 Independents. The modeling, however, might not be that simple. It could be that 1,282 individuals were interviewed but the breakdown was not 350, 402, and 530; the actual numbers might well have been 375 Democrats, 410 Republicans, and 497 Independents. The pollster may nevertheless have had a model holding that the party identification of those surveyed is more accurately represented as 27% Democrat, 31% Republican, and 41% Independent (roughly the 350/402/530 split). The pollster may then adjust the numbers, weighting the Democrat, Republican, and Independent answers to match what it considers a better representation of the participants.

Note that the example above is not aimed at Reuters/Ipsos, since the modeling used in that survey is unknown. It is offered purely to illustrate how various pollsters employ models and says nothing about that particular pollster, so no one should take offense. It does demonstrate, however, how modeling, whether accurate or inaccurate, can affect the outcome of any given poll. An inaccurate model may produce results that differ considerably from reality.
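The hypothetical adjustment described above is commonly called weighting. The sketch below shows simple cell weighting by party identification, assuming the illustrative counts from the example (375/410/497 actually interviewed versus a 350/402/530 model). The per-group approval rates are invented purely for illustration and do not come from any real poll. Each group’s weight is its target share divided by its share of the raw sample.

```python
# Hypothetical sketch of cell weighting by party identification.
# The counts mirror the illustrative (not real) example in the text.

# What the pollster actually collected (hypothetical).
raw_counts = {"Democrat": 375, "Republican": 410, "Independent": 497}

# What the pollster's model says the sample should look like (hypothetical).
target_counts = {"Democrat": 350, "Republican": 402, "Independent": 530}

# Invented share of each group approving of some policy; for illustration only.
approval = {"Democrat": 0.80, "Republican": 0.20, "Independent": 0.50}


def cell_weights(raw, target):
    """Weight for each group = its target share / its share of the raw sample."""
    n_raw = sum(raw.values())
    n_target = sum(target.values())
    return {g: (target[g] / n_target) / (raw[g] / n_raw) for g in raw}


def topline(raw, weights, rates):
    """Weighted share of all respondents who approve."""
    total = sum(raw[g] * weights[g] for g in raw)
    return sum(raw[g] * weights[g] * rates[g] for g in raw) / total


weights = cell_weights(raw_counts, target_counts)
unweighted = topline(raw_counts, {g: 1.0 for g in raw_counts}, approval)
weighted = topline(raw_counts, weights, approval)

for group, w in weights.items():
    print(f"{group}: weight {w:.3f}")
print(f"Unweighted approval: {unweighted:.1%}")
print(f"Weighted approval:   {weighted:.1%}")
```

With these made-up numbers the topline moves by less than half a point (from about 49.2% to about 48.8%). A model that departs further from the raw sample, or groups that disagree more sharply, would move it further.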

The Identity of the Poll Sample

Another factor that shapes a poll’s outcome is the specific identity of those being surveyed (the poll sample). This is especially true of national polls. Some may wonder how the participants’ identities could matter, since most people probably assume that participants are randomly selected. However, that is not always the case. Certain components of a polling methodology may affect who actually takes part in any given survey.

When: A major factor in determining the makeup of a survey’s participants is the timing of the survey. Certain individuals are more likely to be home during the daytime, while others tend to be home in the evening. Even if the pollster has no ill motive, a particular survey may happen to contact significantly more people during the daytime. That can influence the responses, since people who answer during those hours may well have a different worldview than those who are home in the evening. The pollster’s model must then take that into account before the numbers are made public.

Other aspects of when a survey takes place can also influence the outcome. For instance, responses to a poll taken during the week after the 9/11 attack on the Twin Towers might differ considerably from answers to the same questions weeks after the event. Many individuals wanted to go to war immediately, but those same individuals undoubtedly tempered their responses as time went by.

Where: The location of those being surveyed can also weigh heavily, especially in national polls. Are the respondents located in urban or rural areas? Urban dwellers tend to be more liberal than those who live in rural areas. Additionally, is any part of the country over-surveyed? A survey in which 80% of the participants live in California and New York will differ widely from one in which half the participants live in South Dakota and Idaho. Of course, such comparisons must take population distribution into account, but when a survey concentrates on the East Coast or the West Coast of the United States, the responses will likely not be representative of the general population.

Voter Status: A person’s voting record can play a significant part, not so much in a poll’s accuracy as in its relevance. The polling world recognizes three different voter categories when conducting surveys. First, there are polls of the general public. In such a survey, a person’s voting status is not taken into consideration; these polls are simply intended to draw information from the general population. As a result, many of the people who answer the questions are clueless when it comes to the world of politics.

For instance, the Reuters/Ipsos poll mentioned above was a survey of the general public. It was taken over the two to three days following the U.S./Israel bombing of Iran on February 28th, yet 70% of those interviewed knew little or nothing about the bombings. This demonstrates a lack of interest in, or attention to, current events among these individuals. While perhaps one-third to one-half of those interviewed may vote in elections, that group undoubtedly includes low-information voters who back a candidate simply because the candidate is a Republican or a Democrat, following in their parents’ footsteps. Data from this kind of survey is not particularly useful, since it does not really capture the true American political landscape.

Second, there are polls of registered voters. These include individuals who say during the interview that they are registered to vote. Their voting history is not taken into consideration; only their status as registered voters matters. Still, the fact that an individual has made the effort to register suggests that he or she may well be more interested in politics than the general public. While surveys of registered voters are not completely irrelevant, no one should put too much weight on their results.

Finally, there are polls that focus on likely voters. While many individuals may be interviewed, the published results tend to include responses only from those most likely to cast a ballot in an election. Pollsters rely on answers to a number of questions to determine which respondents fall into that category.

Pollsters classify as likely voters those who say they have voted regularly in past elections, but they also use answers to assorted questions to gauge a person’s level of knowledge. Likely voters tend to be better informed than the general public. Consequently, these are the polls that provide what may be considered relevant information, although even these polls often produce results that vary too widely to be taken seriously.
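Actual likely-voter screens are proprietary and differ from pollster to pollster. The sketch below is a hypothetical point-based screen loosely following the criteria described above: registration, past voting, stated intention to vote, and political knowledge. The `Respondent` fields, thresholds, and cutoff are all assumptions made for illustration, not any pollster’s real method.

```python
from dataclasses import dataclass


@dataclass
class Respondent:
    # Hypothetical screening answers; real screens vary by pollster.
    registered: bool
    past_elections_voted: int  # out of the last 4 general elections
    certain_to_vote: bool      # self-reported intention
    knowledge_score: int       # correct answers on 3 current-events items


def likely_voter_score(r: Respondent) -> int:
    """One point per screening criterion met (hypothetical rules)."""
    if not r.registered:
        return 0  # unregistered respondents are screened out entirely
    score = 1  # point for being registered
    score += 1 if r.past_elections_voted >= 3 else 0
    score += 1 if r.certain_to_vote else 0
    score += 1 if r.knowledge_score >= 2 else 0
    return score


def is_likely_voter(r: Respondent, cutoff: int = 3) -> bool:
    """Treat anyone at or above the (hypothetical) cutoff as a likely voter."""
    return likely_voter_score(r) >= cutoff


sample = [
    Respondent(True, 4, True, 3),
    Respondent(True, 1, True, 0),
    Respondent(False, 0, False, 1),
    Respondent(True, 3, False, 2),
]
likely = [r for r in sample if is_likely_voter(r)]
print(f"{len(likely)} of {len(sample)} respondents pass the screen")
```

Two of these four invented respondents pass with a cutoff of 3; raising the cutoff to 4 would leave only one. Small choices like this are one reason likely-voter results can differ from one pollster to the next.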

Where the identity of survey participants is concerned, pollsters take a number of other factors into consideration. These include the participant’s gender as well as party identification, race, professed ideology (liberal, conservative), and so on. Each of these helps pollsters develop a model they hope will offer relevant information to their clients and to the public in general.

One final matter pertaining to poll participation is the sheer size of the survey. It should be obvious that the greater the participation, the more relevant a poll’s data will be. The polls listed in Part 1 of this article ranged from 1,000 participants (Daily Mail) to 2,264 participants (CBS News). For national surveys, it is not unreasonable to look only at polls with a minimum of 1,000 participants, which is roughly 0.0003% of the U.S. population, while 2,264 comes to just over 0.0006%. Anything smaller would produce too large a margin of error (m.o.e.) to be considered useful. The same is not necessarily true of more localized polls covering a state or municipality. However, even at the state level, a poll of 0.0005% of the population would seem to provide better information.
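The margin of error mentioned above can be made concrete. The sketch below uses the textbook formula for a proportion from a simple random sample, z * sqrt(p(1 - p) / n), at 95% confidence (z = 1.96) with the worst case p = 0.5. Real polls are weighted and are rarely pure simple random samples, so their effective margins are usually somewhat wider; treat these figures as an idealized floor. The sketch also applies the standard finite population correction to show that, for large populations, the margin depends on the number of respondents rather than on their share of the population. The population sizes used are illustrative only.

```python
import math
from typing import Optional


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96,
                    population: Optional[int] = None) -> float:
    """Margin of error for a proportion from a simple random sample.

    z = 1.96 gives 95% confidence; p = 0.5 is the worst (widest) case.
    If a population size is supplied, the finite population correction
    sqrt((N - n) / (N - 1)) is applied.
    """
    moe = z * math.sqrt(p * (1 - p) / n)
    if population is not None:
        moe *= math.sqrt((population - n) / (population - 1))
    return moe


# A smaller hypothetical poll, plus the two sample sizes cited from Part 1.
for n in (500, 1000, 2264):
    print(f"n = {n:>5}: +/- {margin_of_error(n):.1%}")

# The same 1,000 interviews drawn from a very large and a much smaller
# population (both population sizes are illustrative only).
for pop in (340_000_000, 580_000):
    print(f"n = 1000, N = {pop:,}: +/- {margin_of_error(1000, population=pop):.2%}")
```

Under these assumptions, 1,000 interviews give roughly plus or minus 3.1 points and 2,264 give roughly plus or minus 2.1 points, and the 1,000-person figure barely changes whether the population is 340 million or 580,000.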

The Role of Wording in Poll Manipulation

The impact of the wording of questions used in political polls cannot be discounted. The construction of a question can significantly influence how respondents interpret and answer it, creating the potential for bias in the results. Leading questions are one common technique. For example, a question may imply a negative viewpoint, likely prompting a certain percentage of respondents to answer in a way that aligns with its phrasing. The purpose is to steer participants’ answers toward the desired results. Here are a couple of examples of what should be considered leading questions.

Are you concerned that America will lose 15 million jobs if ____________ is elected?

How do you view attempts to impede the government’s ability to _______________ from which citizens would benefit greatly?

Additionally, the order in which questions are presented can compound the effects of wording. If a poll begins with emotionally charged questions about a specific political issue, subsequent answers may reflect the emotions evoked earlier rather than the respondents’ true feelings. This phenomenon, known as priming, shows how initial framing can skew the interpretation of later questions. Here are a couple of examples.

Would you be in favor of a relative going to war against Iran if you knew their life could be endangered?

Are you in favor of the U.S./Israel attack on the sovereign country of Iran?

Another method pollsters employ to influence the outcome of a survey is the use of what are termed loaded questions. The objective is to catch the survey participant off balance, forcing him or her to 1) unpack the wording of the question, 2) consider how to answer, and 3) arrive at a response that can be interpreted in a variety of ways. Here are a couple of examples.

Are you disturbed by the administration’s failure to ___________________?

How many people do you know who were negatively impacted by Congress’s decision to _______________?

Ultimately, it is crucial for consumers of political polls to remain cautious and critical about the phrasing they encounter. Recognizing the potential implications of wording can empower individuals to challenge dubious findings and promote a more informed and nuanced understanding of public opinion. By examining question structure and language, one can better judge the validity and reliability of polls, contributing to more transparent and honest political discourse. Of course, pollsters take advantage of the fact that very few members of the public bother to dig into a poll’s questions and methodology; most simply look at the results.

Making Contact

A relatively new issue pollsters have faced in recent years is the challenge of actually making contact with the public. There was a time when a polling company would hire 50 people to sit and make telephone calls for hours on end. In that process, perhaps 10% to 20% of those called would be home and actually answer the phone, and even fewer would be willing to take a political survey.

As time went on and caller ID arrived, fewer and fewer people would answer the phone when they did not recognize the number on the display. Then came the cell phone and, later, the smartphone. One might think this would make it easier to reach the public, since people carry their phones with them at all times, but very few people answer a call from an unfamiliar number. The thinking is that if the matter is important, the caller will simply leave a message. Pollsters, however, generally do not leave messages.

Polling methodology has been heavily affected over these past few years. Rather than relying on constant phone calls, many pollsters have changed their methods. Some now use online platforms and mobile apps to collect their data, and text messaging and address-recruited panels have become regular methods of polling. Certainly the polling industry is changing and will continue to change in the coming years as technology advances. Only time will tell whether that advancement will eventually produce more accurate polling results.

It is an undeniable fact that poll results, especially political poll results, are often skewed. Parts 1 and 2 of this essay have examined examples and methods of polling bias. Because of the extraordinary length of this article, it has been broken into three parts. Be sure to return for Part 3, which focuses on poll bias and poll manipulation and, above all, on why polling bias occurs. After all, the why behind any decision is always important.

End Part 2

If you enjoyed this article, please encourage your friends to visit us here at constitutionmatters.net, where the Constitution really does matter.

See below for contact information

Contact

Questions? Reach out anytime.

Email: contact@constitutionmatters.net

© 2025. All rights reserved.